Train like we fight, fight like we train. Currently, the Air Force is exploring the use of Virtual Reality (VR); however, this does not incorporate the use of a real aircraft. A solution to this problem may be to embed portions of a synthetically generated environment into one’s view of the real world. Embedding portions of the virtual world onto a pilot’s visor whilst airborne could produce higher-quality training. The way to do this is to use Augmented Reality (AR). This new vector towards the airborne environment could enable a paradigm shift in aviation training.

As an Air Force recognised Elite Sportsperson (Pilot) for the sport of Reno Air Racing in the jet class, I have often wondered how we can practise safely in the absence of a training partner. Then it occurred to me: “Why do we even need another physical aircraft at all? What if there was some way of superimposing another aircraft onto our helmet visors so we have a synthetically generated training partner?” I then thought about how the ADF could benefit and came up with this blog.

The predominant approach to the ADF’s pilot training, across all platforms, is to fly the real aircraft and conduct all exercises in it. However, this is expensive in terms of dollars, manpower, time, effort and resources. Providing such training requires an enormous budget and many assets and people to achieve the required outcome.

Currently, the Air Force is exploring only Virtual Reality (VR) for training, which uses no real aircraft; this can be sufficient for learning some skills. However, for certain skills, it may be better to bring the virtual world into the real aircraft’s cockpit, as this could produce higher-quality training. The way to do this is to use Augmented Reality (AR).

What’s the difference between VR and AR?

VR is best described as an artificial digital environment that completely replaces the real environment, immersing a person in a digitally created world. Audio-visual input and, in some cases, other sensory stimuli are created entirely by a digital device and delivered, in most cases, to the person via a headset.

AR, on the other hand, is quite popular these days, but many people cannot distinguish it from VR. The main difference is that AR is ‘layered’ on top of the real world and can take many shapes and forms. The most common are videos, images and other interactive data types embedded in one’s perception of the real world. AR can be used to enhance the user’s real-world experience. Another key difference is that AR can be delivered through smart glasses, headsets and portable devices. So why not through a pilot’s visor?

Is this the solution?

Where VR replaces your vision, AR adds to it. AR devices, such as the Microsoft HoloLens and various enterprise-level “smart glasses,” are transparent, letting you see everything in front of you as if you were wearing a weak pair of sunglasses. The technology is designed for free head movement while overlaying images on whatever the user is viewing.

Current AR Applications

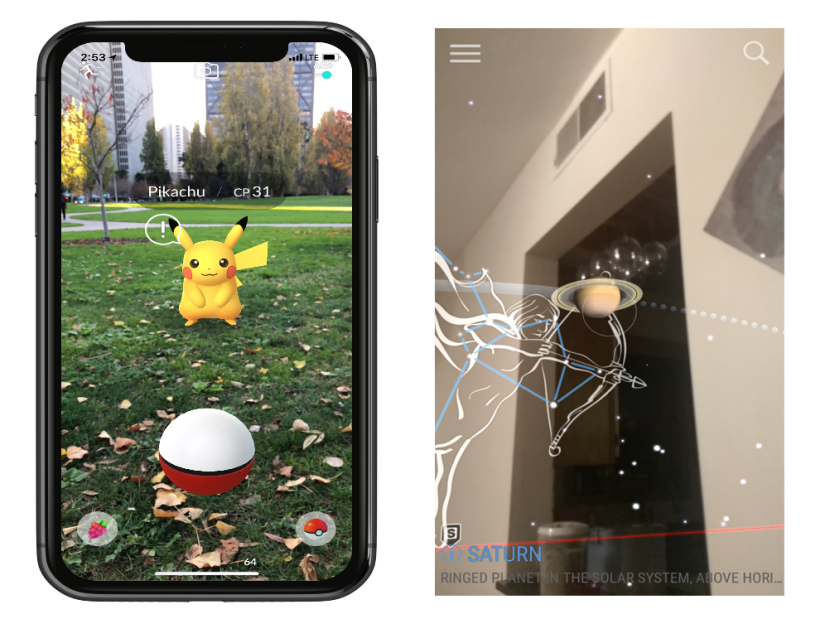

The concept extends to smartphones with AR apps and games, such as Pokemon Go (Figure 1a), which use a phone’s camera to track surroundings and overlay additional information on top of what is displayed on the screen. The SkyView app is another example (Figure 1b).

AR displays can offer something as simple as a data overlay that shows the time, to something as complicated as holograms floating in the middle of a room. Pokemon Go and SkyView synthetically project an image onto a screen on top of whatever the camera is viewing. The HoloLens and other smart glasses, on the other hand, let you virtually place floating app windows and 3D images around you.

Figure 1: (Left) Pokemon Go; (Right) SkyView App.

Current pilot training systems

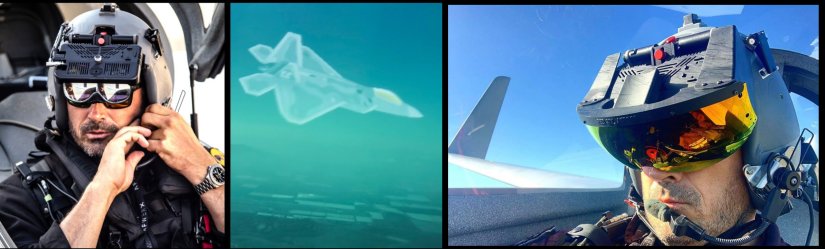

Current technologies allow a pilot to be trained in a real aircraft up in the sky or on the ground in a simulator, which provides a VR experience (see Figure 2).

A simulator on the ground provides a medium for pilots to practise procedures and manoeuvres, e.g. Air-to-Air and Air-to-Ground combat, refuelling, formation flying and emergencies, and is less expensive than flying a real aircraft. However, a pilot misses out on the experience and additional sensory stimulation of actually being in the sky, such as G-forces, the ‘seat-of-your-pants’ feelings and all the other facets of flying a real aircraft whilst airborne: navigation, meteorological effects, physiological effects, flight dynamics, formation, real-world communication, operating aircraft systems and switchology, unplanned flight deviations and so on. Being in the air elicits physiological and psychological reactions and builds muscle memory.

Physically flying a real plane is different from flying a simulator. Simulators are great at providing procedural training, whereas flying a real aircraft provides the actual physical airborne environment, where mistakes are best kept to a minimum.

Figure 2. (Top) Pilot in a real aircraft up in the sky. (Bottom) Pilot on the ground in a simulator.

The F-35 helmet is a great leap forward in technology, providing a pilot with unparalleled Situational Awareness (SA); AR can build on this technology (see Figure 3).

Figure 3. F-35 Helmet with enhanced SA information.

Could this be the solution?

My idea for AR is for a student not just to operate in a simulator wearing a set of VR goggles, immersed in the VR world, but to be placed in a real aircraft with a virtual world superimposed on what they see through their visor.

This would be achieved by synthetically injecting virtual entities into the real world, up in the sky, and having them manoeuvre in relation to both virtual and real aircraft (see Figure 4).

The student physically flies the aircraft and performs all the required tasks but, once a computer program on board the aircraft is initiated, various fictitious ground targets, RedAir threats, friendlies or even a tanker can be superimposed onto the student’s visor.

Figure 4. Images obtained from the Red6AR website (Red6, 2021).

Whereas VR creates an entirely new synthetic world around you, AR adds images to your real surroundings that are not there in real life: for instance, showing an aircraft against the actual sky instead of creating both the aircraft and the sky in a VR environment. The idea is to make it seem to pilots in real aircraft in the sky that they are actually flying with, or against, simulated aircraft.

Beyond Visual Range (BVR), a synthetic target can be generated within the aircraft’s RADAR system and shown on the aircraft’s RADAR display; the student then has to decide on the best course of action to locate, identify and track, or even prosecute, that target. However, when the target comes Within Visual Range (WVR), the computer system on board the aircraft will synthetically superimpose the target onto the student’s helmet visor, with the scale varying according to the range of the target. The student will then be required to manoeuvre accordingly, whether to prosecute RedAir or ground threats, to formate or work as a package with friendlies, or even to carry out an Air-to-Air tanking exercise (see Figure 5).

Figure 5. AR Air-to-Air tanking exercise (Red6, 2021).
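The BVR/WVR hand-off and range-dependent scaling described above can be sketched in a few lines. This is only an illustration under stated assumptions, not how Red6 or any aircraft system implements it: the function names, the 10 nautical mile WVR threshold and the use of a simple angular-size formula are all hypothetical choices made for this example.

```python
import math

# Assumed threshold: the article does not say where visual range begins.
# 10 nautical miles (18,520 m) is a placeholder for illustration only.
WVR_THRESHOLD_M = 18_520.0


def display_channel(range_m: float) -> str:
    """Pick where a synthetic target is shown: 'radar' when BVR, 'visor' when WVR."""
    return "visor" if range_m <= WVR_THRESHOLD_M else "radar"


def angular_size_deg(target_span_m: float, range_m: float) -> float:
    """Apparent angular width, in degrees, of a target of the given span at the given range."""
    if range_m <= 0:
        raise ValueError("range must be positive")
    return math.degrees(2.0 * math.atan(target_span_m / (2.0 * range_m)))


if __name__ == "__main__":
    # A hypothetical 10 m wide target closing from 40 km to 500 m.
    for rng in (40_000.0, 15_000.0, 2_000.0, 500.0):
        print(rng, display_channel(rng), round(angular_size_deg(10.0, rng), 3))
```

As the range shrinks, the target moves from the radar display to the visor and its drawn size grows, which is the behaviour the paragraph above describes.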

There’s no substitute for actually flying a real aircraft in the sky. Unlike in a simulator, when you strap into an aircraft you actually feel the pressures, e.g. G-forces and the possibility of running out of fuel or crashing into the ground, and the cognitive load on a pilot’s brain is massively increased.

Possibility to reality

Through AR, pilots still fly the aircraft whilst having to use and visualise navigation systems, work with air-traffic control, experience weather and understand rough terrain, all in a real-world airspace environment, and at the same time execute time-critical mission tasks and objectives. AR applications could help pilots avoid making mistakes and train them to make the right decisions at the right time in a time-compressed environment.

Fortunately, there is a US company (Red6, 2021) already exploring this idea, so a Commercial off-the-shelf (COTS) purchase may be the solution.

If this is achievable, the value of this efficiency would be felt in all cost domains: a reduced training burden on squadron personnel and aircraft, and fewer resources, less logistics and less fatigue on both personnel and aircraft to achieve a desired outcome. This addition to the training environment would add value to a student’s learning, thereby increasing the value of mission training objectives and reducing costs to the ADF.

Comments

Ex back

I really want to thank Dr Emu for saving my marriage. My wife really treated me badly and left home for almost 3 month this got me sick and confused. Then I told my friend about how my wife has changed towards me. Then she told me to contact Dr Emu that he will help me bring back my wife and change her back to a good woman. I never believed in all this but I gave it a try. Dr Emu casted a spell of return of love on her, and my wife came back home for forgiveness and today we are happy again. If you are going through any relationship stress or you want back your Ex or Divorce husband you can contact his email emutemple@gmail.com or WhatsApp +2348071657174

Https://web.facebook.com/Emu-Temple-104891335203341

Services Offered

After reading many testimonials about authentic lottery spell casters and spiritual guidance for financial luck, I felt called to reach out. Dr. Alaska welcomed me with patience, listened to my journey, and performed a lottery spell aligned with positive energy and divine timing. I was also given numbers to play as part of the spiritual guidance.

When I checked the results and realized I had won $3,400,000, I was overwhelmed with gratitude. This experience reaffirmed my belief that spiritual forces, when guided by a genuine and gifted practitioner, can open doors to abundance and transformation.

Spiritual Services Offered:

Love Attraction & Relationship Harmony

Bring Back Lost Lover

Business & Financial Blessings

Court Case & Legal Success

Protection From Negative Energy & Evil Forces

Lottery & Luck Enhancement

Dr. Alaska’s work is rooted in sincerity, spiritual wisdom, and respect for each person’s path. I am truly thankful for this blessing and highly recommend reaching out to those seeking positive change.

Contact: alaskaspellcaster44@gmail.com

DR UYI HELP ME WIN LOTTERY ALL THANKS TO YOU DR UYI

I had to write back and say what an amazing experience I had with Dr Uyi for his powerful lottery spell. My Heart is filled with joy and happiness after he cast the Lottery spell for me, And i won $750,000,000 His spell changed my life into riches, I’m now out of debts and experiencing the most amazing good luck with the lottery after I won a huge amount of money. My life has really changed for good. I won (seventy five thousand dollars)Your Lottery spell is so real and pure. Thank you very much for the lottery spell that changed my life” I am totally grateful for the lottery spell you did for me Dr Uyi and i say i will continue to spread your name all over so people can see what kind of man you are. Anyone in need of help can email Dr Uyi for your own lottery number, because this is the only secret to winning the lottery. Email: (drzukalottospelltemple@gmail.com) OR WhatsApp on +17174154115

MY HEART IS FULL OF GRATITUDE OF WHAT DR UYI DID FOR ME BY HELPI

It’s an honor to share this testimony to the world. Dr UYI you're a blessing to our generation. I have always desired to win lottery some day, at some point I thought it was something impossible, until I came across series of testimonies on how had helped a lot of people win lottery, at first I was so skeptical about it because I haven't seen a things as such in my life, at a second thought I decided to give it a try so I emailed him via his person email, he replied me and instructed me on what to do, after 24 hours he gave me some numbers and I won $2 million just like that. Dr UYI I owe everything for making me the man that I am right. If you need his help below is his contact information, he can help too Email: drzukalottospelltemple@gmail.com OR WhatsApp on +17174154115

THE HACK ANGELS RECOVERY EXPERT // THE BEST RECOVERY EXPERTS

THE HACK ANGELS RECOVERY EXPERT // THE BEST RECOVERY EXPERTS

I cannot express enough gratitude for THE HACK ANGELS RECOVERY EXPERT. They are truly the leading experts in the field and deserve every bit of praise for recovering cryptocurrency wallets when all hope seems lost. Thanks to their intervention, I can finally reclaim peace of mind and focus on rebuilding my financial future with renewed confidence. I highly recommend anyone in a similar situation, details below to get in touch with them right now.

web: https://thehackangels.com

Mail Box; support@thehackangels.com

Whatsapp; +1(520)-200,2320

thank u dr oseremen

𝐀𝐫𝐞 𝐘𝐨𝐮 𝐅𝐞𝐞𝐥𝐢𝐧𝐠 𝐋𝐞𝐭 𝐃𝐨𝐰𝐧 𝐁𝐲 𝐘𝐨𝐮𝐫 𝐋𝐨𝐯𝐞𝐫, 𝐀𝐫𝐞 𝐘𝐨𝐮 𝐎𝐧 𝐀 𝐁𝐫𝐢𝐧𝐤 𝐎𝐟 𝐃𝐢𝐯𝐨𝐫𝐜𝐞 𝐎𝐫 𝐀𝐥𝐫𝐞𝐚𝐝𝐲 𝐃𝐢𝐯𝐨𝐫𝐜𝐞𝐝? 𝐀𝐫𝐞 𝐘𝐨𝐮 𝐒𝐚𝐝𝐝𝐞𝐧𝐞𝐝 𝐎𝐫 𝐃𝐞𝐩𝐫𝐞𝐬𝐬𝐞𝐝 𝐃𝐮𝐞 𝐓𝐨 𝐓𝐡𝐞 𝐒𝐞𝐩𝐚𝐫𝐚𝐭𝐢𝐨𝐧 𝐖𝐢𝐭𝐡 𝐘𝐨𝐮𝐫 𝐏𝐚𝐫𝐭𝐧𝐞𝐫, 𝐀𝐫𝐞 𝐘𝐨𝐮 𝐃𝐢𝐬𝐚𝐩𝐩𝐨𝐢𝐧𝐭𝐞𝐝 𝐈𝐧 𝐖𝐡𝐚𝐭 𝐇𝐞/𝐒𝐡𝐞 𝐈𝐬 𝐃𝐨𝐢𝐧𝐠. 𝐘𝐨𝐮 𝐅𝐞𝐞𝐥 𝐁𝐫𝐨𝐤𝐞𝐧𝐡𝐞𝐚𝐫𝐭𝐞𝐝 𝐋𝐨𝐧𝐞𝐥𝐲 𝐄𝐦𝐨𝐭𝐢𝐨𝐧𝐚𝐥𝐥𝐲 𝐃𝐢𝐬𝐜𝐨𝐧𝐧𝐞𝐜𝐭𝐞𝐝. 𝐅𝐫𝐮𝐬𝐭𝐫𝐚𝐭𝐞𝐝 𝐁𝐞𝐜𝐚𝐮𝐬𝐞 𝐎𝐟 𝐀 𝐁𝐫𝐞𝐚𝐤 𝐔𝐩/𝐄𝐱𝐭𝐫𝐚𝐦𝐚𝐫𝐢𝐭𝐚𝐥 𝐀𝐟𝐟𝐚𝐢𝐫, 𝐋𝐢𝐟𝐞 𝐈𝐬 𝐓𝐨𝐨 𝐒𝐡𝐨𝐫𝐭 𝐓𝐨 𝐁𝐞 𝐔𝐧𝐡𝐚𝐩𝐩𝐲. 𝐓𝐡𝐢𝐧𝐤 𝐀𝐛𝐨𝐮𝐭 that. 𝐘𝐨𝐮 𝐃𝐞𝐬𝐞𝐫𝐯𝐞 𝐔𝐭𝐦𝐨𝐬𝐭 𝐇𝐚𝐩𝐩𝐢𝐧𝐞𝐬𝐬 𝐀𝐧𝐝 𝐋𝐨𝐯𝐞. 𝐓𝐡𝐢𝐬 𝐈𝐬 𝐘𝐨𝐮𝐫 𝐒𝐨𝐥𝐮𝐭𝐢𝐨𝐧⬇️⬇️⬇️

What'sApp 2348153622587

Oseremenspelldr@gmail. com

love spell

What a miracle to have my ex back and i need to share this great testimony… I just want to say thanks Dr ogbo for taking time to help me cast the spell {now my husband},who suddenly lost interest in me after six month of engagement, but today we are married and we are more happier than ever before, I am really short of words and joyful, and i don’t know how much to show my appreciation to you Dr ogbo you are a God sent to restore broken relationship. he deeply enjoy helping people achieve their desires, find true love, getting their ex lovers back, stop abusive relationships, find success, attract happiness, find soul mates and more, contact him today and let him show you the wonders and amazement of his Love Spell System. He deliver results at his best in real spell casting, email: Psychicogboreading@gmail.com OR what’sApp +2349057657558

MY LOTTERY SUCCESS STORY

I acquired my first home at the beginning of the year. To some, lottery players appear ridiculous, while we view it as a chance for an improved future. I'm known as Megan Taylor and I have played the lottery for ten years, yet I haven't won anything substantial. In my pursuit of understanding how to win better, I was advised to consult Lord Meduza, the priest who provided me with the precise 6 numbers that helped me secure the Mega Millions jackpot of $277 million after using his spell powers and following his guidance. Lord Meduza services is top notch, and I believe your dreams aren't finished unless you decide they are. Contact Lord Meduza the priest, and he will assist you in realizing your aspirations..

Email: lordmeduzatemple@hotmail.com

Whats App: +1 (807) 798-3042

Website: lordmeduzatemple.com

real lotto spell caster Dr Dominion

i am super excited this very moment indeed Dr Dominion is truly a good man, i doubted him from the beginning but right now i can trust him with my all because he has put a beautiful smile on my face and change my life from struggle to millionaire, what would i have done without him, just few months ago i was struggling wondering what would my life turn into, now i am here sharing a wonderful testimony of my life, after i explained my whole situation to him he took me as his own daughter and removed my family from shame, he prepared a lottery winning spell for me with true sincerity and gave me a winning number to play and that was how i won that jackpot, Dr Dominion took away my shame, my pain and my worries, what a humble man with a heart of gold, thank you so much i am very grateful for what you have done for me, should you have any interest in giving it a try or going through any difficulties i think he is the right man to talk to and i believe he can help

Email: dominionlottospelltemple@gmail.com

website: dominionlottospell.wixsite.com/dr-dominion

phone: +12059642462

real lotto spell caster Dr Dominion

i am super excited this very moment indeed Dr Dominion is truly a good man, i doubted him from the beginning but right now i can trust him with my all because he has put a beautiful smile on my face and change my life from struggle to millionaire, what would i have done without him, just few months ago i was struggling wondering what would my life turn into, now i am here sharing a wonderful testimony of my life, after i explained my whole situation to him he took me as his own daughter and removed my family from shame, he prepared a lottery winning spell for me with true sincerity and gave me a winning number to play and that was how i won that jackpot, Dr Dominion took away my shame, my pain and my worries, what a humble man with a heart of gold, thank you so much i am very grateful for what you have done for me, should you have any interest in giving it a try or going through any difficulties i think he is the right man to talk to and i believe he can help

Email: dominionlottospelltemple@gmail.com

website: dominionlottospell.wixsite.com/dr-dominion

phone: +12059642462

Best tips to win the lottery using spell

Before I became a lottery winner, I wondered how other lottery winners has done it because I thought it was impossible then, until Chief Ade helped me with his spiritual prayer that works urgently. I was scared to try spell because of what I heard about spell, but I still gave it a try and let Chief Ade do what he knows how to do best. Chief Ade put in work after I provided all the necessary materials needed for the work, he gave me the lottery numbers the next day and instructed me on how to play which I did, I won the 65 MILLION LOTTO MAX jackpot the next day and I was so happy to announce to my wife and children about my wins, I'm Mark Hanley of Newmarket, Ontario. I'm sharing my great experience to you all who are finding it difficult to win the lottery, reach out to Chief Ade on chiefadespellhome@gmail.com OR WhatsApp +234 901 380 6328 you will never regret contacting Chief Ade

THE HELP YOU NEED IN YOUR RELATIONSHIP

"After the breakup, there was a deep sense of loss and a strong desire to reconnect. It felt important to explore every possible avenue to bridge the gap that had formed. Dr. Agbazara brought back my lover in just 72hours after some recommended him to me here online, i will only advise those who are having issues in there relationship or marriages to contact Dr.AGBAZARA TEMPLE through these details via; ( agbazara@gmail. com ) or call him on Whatsapp: ( +234 810 410 2662 and experience his true healing powers like i did.

THIS IS HOW I WON

I am Mrs. Grace Havin from Auburn, Washington, and I was blessed to win the $754,550,826 Powerball Jackpot on February 6, 2024. I give all glory to God Almighty for this life-changing miracle. I also want to sincerely appreciate Papa Adelli, who helped me with the lottery numbers that brought me this incredible win. His spells truly work, and I am living proof. I feel it is important to share my testimony with the world so others can know about his powerful work. If you are interested in lottery spells or any kind of spiritual help, you can contact Papa Adelli Spells, and he will surely assist you.

Email: papaadelli0 @ gmail. com

CALL/WhatsApp number: +2349019250610

love spell

I want to use this opportunity to thank the great prophet for restoring back my home when i taught all hope was lost. Ex lover left for another woman and i met this great spell caster online call Dr Jato and i explain my situation to him, after 24 hours my ex lover came back to me and now we are happy together You can contact this great man for any kind of spiritual work at whatsapp +2348140073965. Or Email: jatolovespell@gmail.com

....

MY LOTTERY WINING SUCCESS STORY

Hi, I acquired my first home at the beginning of the year. To some, lottery players appear ridiculous, while we view it as a chance for an improved future. I'm known as Megan Taylor and I have played the lottery for over ten years, yet I haven't won anything substantial. In my pursuit of understanding how to win better, I was advised to consult Lord Meduza, the priest who provided me with the precise 6 numbers that helped me secure the Mega Millions jackpot of $277 million after using his spell powers and following his guidance.. Lord Meduza services is top notch, and I believe your dreams aren't finished unless you decide they are. Contact Meduza the priest and he will assist you in realizing your aspirations.

Email: lordmeduzatemple@hotmail.com

Whats App: +1 807 798 3042

Website: lordmeduzatemple.com

Testimonials & Reviews of a real spell caster

Fix Your Broken Relationship" It is amazing how quickly Dr. Excellent brought my husband back to me. My name is Heather Delaney. I married the love of my life Riley on 10/02/15 and we now have two beautiful girls Abby & Erin, who are conjoined twins, that were born 07/24/16. My husband left me and moved to be with another woman. I felt my life was over and my kids thought they would never see their father again. I tried to be strong just for the kids but I could not control the pains that tormented my heart, my heart was filled with sorrows and pains because I was really in love with my husband. I have tried many options but he did not come back, until i met a friend that directed me to Dr. Excellent a spell caster, who helped me to bring back my husband after 11hours. Me and my husband are living happily together again, This man is powerful, contact Dr. Excellent if you are passing through any difficulty in life or having troubles in your marriage or relationship, he is capable of making things right for you. Don't miss out on the opportunity to work with the best spell caster. Here his contact. Call/WhatsApp him at: +2348084273514 "Or email him at: Excellentspellcaster@gmail.com

Best Cryptocurrency Recovery Company | Alpha Recovery Experts

Consulting an expert like Alpha Recovery Experts for recovering lost crypto from fraudsters is essential due to the complexities involved in navigating blockchain transactions. Understanding the intricacies of blockchain technology and the legal aspects of recovering stolen crypto assets requires specialized knowledge and expertise. Alpha Recovery Experts possess the skills and resources to delve into the digital footprints left by fraudsters, utilizing advanced technological tools and strategies to trace and recover lost crypto efficiently. By entrusting the recovery process to an expert like Alpha Recovery Experts, victims can benefit from his in-depth understanding of the complexities of crypto scams and his ability to navigate these challenges effectively.

LORD MEDUZA IS THE REASON FOR MY FINANCIAL INCREASE

Praise be to the incredible Lord Meduza, I've never seen or heard of his lottery spell failing, not even once, and that includes my own experience. Every day, I feel renewed blessings after connecting with the Great Lord Meduza, who gifted me my winning six-digit lottery numbers that won me $740,000,000 million dollars by using his magical skills to foresee the outcome. I'm a dad of four, and it's been a few years since I lost my wife to cancer; I miss her terribly. To wrap things up, I really want to thank Great Meduza for being so good at what he does. His guidance and lessons were applied swiftly and precisely, leading us to the best possible result, which we definitely achieved. You can reach him by email: lordmeduzatemple@hotmail.com or check out his website via ( www.lordmeduzatemple.com ) for more information. Stay blessed and know you're in good hands with Lord Meduza.

LOTTERY

Good day viewers, I wish to share my testimony with all of you. I have daily 9 to 5 jobs, but while I work, I try my luck at playing instant Lotto. On this particular day, I decided to seek help online regarding tips for winning the lottery and I saw many individuals testifying about OGBO spells. I reached out to him and informed him that I needed help to win the lottery, and he clarified the process to me stating that after he casts the spell, it will take 48 hours for him to provide me the winning numbers which I accepted. I followed all his instructions and he provided me with the numbers to enter the Lottery. After the draw the following morning I received a notification on the Lottery app on my phone indicating that I was the lucky winner of $273 million on the New Jersey Lottery and I’m here to extend my heartfelt gratitude to OGBO Spell If you seek help in your life, WhatsApp +2348110968476 or Email: Psychicogboreading@gmail.com

HOW I WON THE LOTTERY

Before I became a lottery winner, I wondered how other lottery winners had done it because I thought it was impossible then, until Chief Ade helped me with his spiritual prayer that works urgently. I was scared to try the spell because of what I heard about it, but I still gave it a try and let Chief Ade do what he knows how to do best. Chief Ade put in work after I provided all the necessary materials needed for the work, he gave me the lottery numbers the next day and instructed me on how to play which I did, I won the 65 MILLION LOTTO MAX jackpot the next day and I was so happy to announce to my wife and children about my wins, I'm Mark Hanley of Newmarket, Ontario. I'm sharing my great experience to you all who are finding it difficult to win the lottery, reach out to Chief Ade on chiefadespellhome@gmail. com OR WhatsApp +234 901 380 6328 you will never regret contacting Chief Ade ..

The best thing that has ever…

The best thing that has ever happened in my life is how I won the lottery euro million mega jackpot. I am woman who believes that one day I will win the lottery. Finally, my dreams came true when I emailed DR OGBO. and told him I needed the lottery numbers. I have spent so much money on a ticket just to make sure I win. But I never knew that winning was so easy until the day I met the spell caster online which so many people have talked about that he is very great at casting lottery spells, so I decided to give it a try. I contacted this great Doctor and he did a spell and he gave me the winning lottery numbers. But believe me when the draws were out I was among winners. I won $64.000.000USD. DR OGBO truly you are the best, with these great Dr you can win millions of money through the lottery. I am so very happy to meet this great man now, I will forever be grateful to you dr. Add him up on Email:psychicogboreading@gmail.com and you can as well text him on WhatsApp +2348110968476

HOW I WIN BACK MY EX BACK

The marital crisis I was going through came to and end when Dr Adoda got involved. Just like every other couples out there, we had our differences and I ensure I did my best to lead her right, provide for her and my kids and also protected them. We both made sacrifices to ensure we survived the union until she started acting up and deliberately had so many fights with me. The home became unpleasant for everyone else including my children. She was being manipulated by a friend who had been divorced and wanted to also ruin my marriage. I had to contact {http://dradodalovetemple.com} and he responded and did his best to end what was going on within us. He restored the love and connection between us and I can tell now that whatever illusion my wife was under has been taking away and she is a better Wife and a mother now. The result of what Dr Adoda did for me that manifested after 48HOURS was what amazed me. I and my household remain grateful. I appeal to anyone who needs help to fix their relationships and marital problems to Visit Adoda website because the solution is sure. http://dradodalovetemple.com

Herbal Cure for Herpes and HPV

God bless Dr. Uyi for his marvelous work, I was diagnosed of herpes for few years and I was taking my medications, I wasn't satisfied i needed to be cured completely from herpes, I searched about some possible cure for herpes i saw a comment about Dr. Uyi, how he cured HERPES VIRUS and HPV with his herbal medicine, I contacted him and explain to him, he guide me I asked for solutions, after a few discussion and i have done what i was required to do, he sent me the medicine. I took the medicine as prescribed by him, few days later I went to the hospital for a test when the test was negative, i am now perfectly ok. Dr. Uyi truly you are great, If u need his help? contact him via email: uyiherbalmedicationcenter@gmail.com or WhatsApp: +2348160813984

VR Gaming World

What if the mindset is very familiar in Australia? I think it really is! I see parallels with gaming digital platforms where you can invest and get rich. So I downloaded the app on https://golden-crown-au.net/install-app/ platform and explored bonuses, deposits and user flow. It becomes obvious that people will more often appreciate predictability combined with efficiency. In a similar way, knowing what to expect from betting, you can choose the game that you can well correspond to.

Michigan Lottery Player Wins $1 Million Powerball Prize.

I am a Michigan lottery player, and before my breakthrough, I always wondered how others managed to win because it felt impossible for me. Everything changed when I connected with Dr. Alaska. At first, I was hesitant because of things I had heard about spiritual work, but I decided to give it a try. He carried out a powerful prayer and guided me through the process step by step. After I provided the required materials, he gave me special lottery numbers the very next day and explained how to play. I followed his instructions carefully, and to my greatest surprise, I won a $1 million Powerball jackpot the next day. I was overwhelmed with joy and couldn’t wait to share the good news with my wife and children. My name is Brian Bauer from Washtenaw County, and this experience has truly changed my life. If you’ve been struggling to win the lottery, you can also reach out to Dr. Alaska at alaskaspellcaster44@gmail.com or WhatsApp +233 277 283 626. You may be surprised at what can happen.

love spell

I have hard read many testimonies about Dr Jato spell caster how he has help many people of relationships issues, power-ball, Mega millions and I contacted him and it doesn't take much time that he help me cast his spell and gave me the winning numbers to play and assure me that I will win the powerball jackpot and all that he said came to pass and today I'm rich through his spell he used to help me win the jackpot. If you have ever spent money on any spell caster and couldn't see any results.... this is the only way to win the lottery and the best way!!via his Email jatolovespell@gmail.com or whatsapp/call +2348140073965

FB Page: https://www.facebook.com/share/1D5myk4Bxb/

Youtube Page: https://www.youtube.com/@JatoLoveSpell

.
